Your agentic AI pilot impressed every stakeholder in the room. The demo was flawless. Six months later, it’s still sitting in a staging environment, burning cloud budget and going nowhere.

You’re not alone. According to IDC research, 88% of AI agent proofs of concept (POCs) never graduate to production deployment. For every 33 pilots a company launches, only 4 make it out alive.

Gartner predicts that over 40% of agentic AI projects will be canceled outright by the end of 2027, citing escalating expenses, unclear business value, and weak risk controls.

The question isn’t whether agentic AI works. It does. The question is why most organizations can’t get it past the demo.

The Pilot Trap: Why “It Worked in the Demo” Is Dangerous

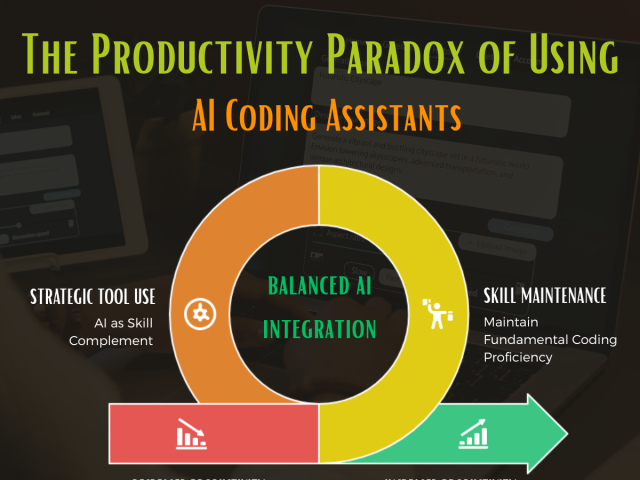

The gap between a working pilot and a production system is wider for agentic AI than for any previous technology wave. MIT’s GenAI Divide report found that 95% of generative AI pilots fail to deliver expected ROI. Not because the models underperform, but because the surrounding infrastructure, governance, and operational readiness weren’t part of the pilot scope.

Where the IDC finding measures how many pilots reach production at all, MIT’s research measures how many deliver measurable financial returns. The gap between “deployed” and “delivering ROI” is where most value leaks.

This isn’t a technology problem. It’s an architecture and organizational problem.

A pilot typically runs on clean, curated data with a single user testing predefined scenarios. Production means messy data, concurrent users, edge cases your team never imagined, and compliance requirements that weren’t relevant during the demo.

When you’re orchestrating multi-agent workflows, a pilot can mask fundamental issues: latency under load, hallucination rates on real-world inputs, and the absence of guardrails for autonomous decision-making.

The financial toll is real. A Gartner survey of 782 I&O leaders found that only 28% of AI use cases in infrastructure and operations fully meet ROI expectations. Of those who experienced failure, 57% cited “expecting too much, too fast” as the root cause.

Factor in technology investment, personnel, and the months your team spent in pilot purgatory, and the bill adds up quickly.

Three Reasons Your Agentic AI Pilot Will Die

After shipping production AI applications and evaluating dozens of agentic AI architectures for clients, we’ve seen the same three failure patterns repeatedly.

1. The Mock API Trap

Nearly half of enterprises cite integration and governance as their top agentic AI barriers (Deloitte, 2026). Your agent needs real-time connectivity to your CRM, ERP, databases, and third-party APIs. In the pilot, you mocked these connections or used a snapshot of production data.

The model orchestration is often the easy part. The hard part is connecting the agent to your actual systems through reliable, secure, production-grade integrations that handle authentication, rate limits, and partial failures.
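To make "handles rate limits and partial failures" concrete, here is a minimal sketch of a retry wrapper an agent's tool calls could go through. The exception classes `RateLimited` and `TransientFailure` and the function `call_with_retry` are hypothetical names invented for this illustration, not part of any particular SDK. Your CRM or ERP client will raise its own error types, which you would map onto these.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical error: the upstream system asked us to slow down."""

    def __init__(self, retry_after=None):
        super().__init__("rate limited")
        self.retry_after = retry_after  # seconds suggested by the server, if any


class TransientFailure(Exception):
    """Hypothetical error: a timeout or server-side failure worth retrying."""


def call_with_retry(fn, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call fn(), retrying rate limits and transient failures with backoff.

    Any other exception (for example an authentication error) propagates
    immediately, because retrying will not fix it.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except RateLimited as exc:
            if attempt == max_attempts:
                raise
            # Prefer the server's hint; otherwise back off exponentially.
            if exc.retry_after is not None:
                delay = exc.retry_after
            else:
                delay = base_delay * 2 ** (attempt - 1)
        except TransientFailure:
            if attempt == max_attempts:
                raise
            delay = base_delay * 2 ** (attempt - 1)
        # A little jitter keeps many agents from retrying in lockstep.
        sleep(delay + random.uniform(0, delay / 10))
```

The `sleep` parameter is injectable so the behavior can be tested without real waiting. In a pilot with mocked APIs, none of these code paths are ever exercised, which is part of why the gap only shows up in production.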

2. The Governance Vacuum

Fewer than 1 in 5 enterprises we’ve assessed have formal governance frameworks for AI agent behavior. Yet your agentic AI system is making autonomous decisions: classifying documents, routing customer inquiries, generating emails, and prioritizing tasks.

In regulated industries like FinTech and HealthTech, this isn’t just risky. It’s a non-starter. Compliance teams will (rightfully) block production deployment until they see structured output validation, hallucination mitigation, and decision logging baked into the agent architecture.
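As a rough illustration of what compliance teams tend to ask for, the sketch below validates a model's raw output against a fixed schema and writes one log record per decision. It uses only the Python standard library. The label set, the field names, and the functions `parse_decision` and `decide_and_log` are assumptions made up for this example. A real system would use its own schema and send the log to an audit store.

```python
import json
import logging
from dataclasses import dataclass

logger = logging.getLogger("agent.decisions")

ALLOWED_LABELS = {"invoice", "contract", "other"}  # illustrative label set


@dataclass(frozen=True)
class Decision:
    label: str
    confidence: float


def parse_decision(raw: str) -> Decision:
    """Validate raw model output; raise ValueError on anything unexpected."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    label = data.get("label")
    confidence = data.get("confidence")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        raise ValueError(f"unknown label: {label!r}")
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return Decision(label=label, confidence=float(confidence))


def decide_and_log(document_id: str, raw: str):
    """Return a validated Decision, or None if the output was rejected."""
    try:
        decision = parse_decision(raw)
    except ValueError as exc:
        logger.warning(json.dumps(
            {"document_id": document_id, "status": "rejected", "reason": str(exc)}
        ))
        return None
    logger.info(json.dumps({
        "document_id": document_id,
        "status": "accepted",
        "label": decision.label,
        "confidence": decision.confidence,
    }))
    return decision
```

Rejected outputs never reach downstream systems, and every decision, accepted or not, leaves a record that can be reviewed later.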

3. Wrong Problem Selection

In our experience, strategic misalignment in use case selection is the single largest driver of AI project failure. Teams pick the most impressive use case for the pilot, not the most production-viable one. The result: a brilliant demo that requires 18 months of infrastructure work before it can run in production.

The 12% that make it pick bounded problems first. Document classification. Data extraction from structured forms. Internal workflow routing. These problems are contained, measurable, and don’t require your agent to reason about ambiguous situations with high stakes.

What the 12% Do Differently: A Production Playbook

The organizations that move agentic AI from pilot to production share a consistent pattern. It’s not about better models or bigger budgets. It’s about how they structure the deployment.

Start With Constrained Autonomy

Don’t give your agent full autonomy on day one. Deployments that reach production follow a graduated model:

Most production agents live permanently in Phase 2 or Phase 3. That’s not a limitation. That’s good architecture.

Case in point: one client in the wellness and hospitality industry runs a document processing agent that reduced manual review from 45 minutes to under 4 minutes. It has been running in production for 6 months with a 97% accuracy rate. It operates in Phase 3: routine documents processed autonomously, flagged edge cases routed to a human reviewer. No dramatic AI takeover. Just a measurable, durable win that compounds every week.
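The "routine documents processed autonomously, edge cases routed to a human" pattern can be sketched as a small routing function. This is one plausible way to implement it, not a description of the client system above. The `AgentResult` fields, the `route` function, and the 0.9 threshold are all illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_PROCESS = "auto_process"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class AgentResult:
    document_id: str
    confidence: float      # score in [0, 1]; assumed to come from the agent
    is_routine_type: bool  # document type is on an approved "routine" list


def route(result: AgentResult, threshold: float = 0.9) -> Route:
    """Auto-process only routine documents the agent is confident about.

    Everything else defaults to human review, so an unexpected input
    fails safe instead of being processed silently.
    """
    if result.is_routine_type and result.confidence >= threshold:
        return Route.AUTO_PROCESS
    return Route.HUMAN_REVIEW
```

The design choice worth noting is the default: human review is the fallback branch, so widening autonomy later means loosening one condition rather than rewriting the flow.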

Build the Data Pipeline Before the Agent

Your agent is only as good as the data it reasons about. Before writing a single line of agent code, validate that your data infrastructure can support real-time retrieval and updates.

If you’re using RAG (Retrieval-Augmented Generation) with a vector database like Pinecone or pgvector, test your retrieval pipeline with production-scale data, not a 500-document sample.

We’ve seen teams build sophisticated multi-agent systems on LangGraph, only to discover their vector store returns irrelevant results 30% of the time when loaded with real production data. The agent logic was sound. The data pipeline was the bottleneck.
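One simple way to catch this before building the agent is to measure retrieval quality directly on a set of labeled queries. The sketch below computes a hit rate at k: the share of queries for which at least one known-relevant document appears in the top k results. The `retrieve` callable is a stand-in for whatever your vector store client exposes. Its signature here (query and k in, a list of document IDs out) is an assumption for illustration, not the Pinecone or pgvector API.

```python
def hit_rate_at_k(retrieve, labeled_queries, k=5):
    """Fraction of queries with at least one relevant document in the top k.

    retrieve: callable (query: str, k: int) -> list of document IDs
    labeled_queries: list of (query, set_of_relevant_document_ids) pairs
    """
    if not labeled_queries:
        raise ValueError("labeled_queries must not be empty")
    hits = 0
    for query, relevant_ids in labeled_queries:
        top_k = retrieve(query, k)
        if any(doc_id in relevant_ids for doc_id in top_k):
            hits += 1
    return hits / len(labeled_queries)
```

Run it once against the pilot sample and once against the full production corpus with the same labeled queries. If the number drops sharply between the two, the problem is in the pipeline, not the agent.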

Production Guardrails From Day One

Every production agent needs these safeguards:

These aren’t nice-to-haves. They’re the difference between a production system and a liability.

When Agentic AI Is the Wrong Answer

Not every problem needs an autonomous agent. And saying so is what separates practitioners from vendors.

If your workflow is deterministic and well-defined, a simple automation pipeline (or even a well-designed n8n workflow) will outperform an agentic system at a fraction of the complexity. Agents add value when decisions require reasoning over unstructured data, when context matters, and when the task benefits from adaptive behavior.

Gartner estimates that only about 130 of the thousands of vendors claiming “agentic AI” capabilities are actually delivering genuine agentic solutions. The rest are engaged in what analysts term “agent washing”: rebranding existing chatbots and RPA tools. Before you invest in agentic AI, make sure the problem actually requires agency.

We’ve recommended against building AI agents on 3 of our last 10 assessments because the client’s data infrastructure needed work first. That honest assessment saved those clients months and significant budget. It’s a conversation we’d rather have upfront than six months into a pilot that was never going to ship.

The Real First Step

The 12% don’t get lucky. They prepare.

The 88% failure rate isn’t evidence that agentic AI is immature. The technology works. What fails is the assumption that production readiness is something you figure out after the pilot impresses leadership. The organizations that ship treat infrastructure, governance, and use case selection as first-class concerns from week one, not afterthoughts bolted on before launch.

The 12% that ship are the ones capturing that 171% ROI. Not because they built better agents. Because they were ready to run them.

If your pilot is sitting in staging today, the path forward isn’t a better model. It’s a harder look at everything around it. Here’s where that harder look starts.

The Starting Conditions Checklist

What you actually need before your first agentic AI build.

Most AI readiness frameworks ask whether your organization is ready for everything. This one asks a different question: do you have enough to begin?

Three sections, 18 items, one page.

- What you need to start. Six conditions most mid-market leaders already have.

- What a good partner handles for you. The six items that kill 88% of pilots. You don’t need these in-house.

- What we build together. The joint work that turns a pilot into a production system.

Send us your completed checklist and we’ll send back a 2-page diagnostic with the three highest-leverage starting points for your organization and what we would build in the first 30 days. No sales pitch. Senior engineers reviewing your situation directly.

Download the Starting Conditions Checklist →